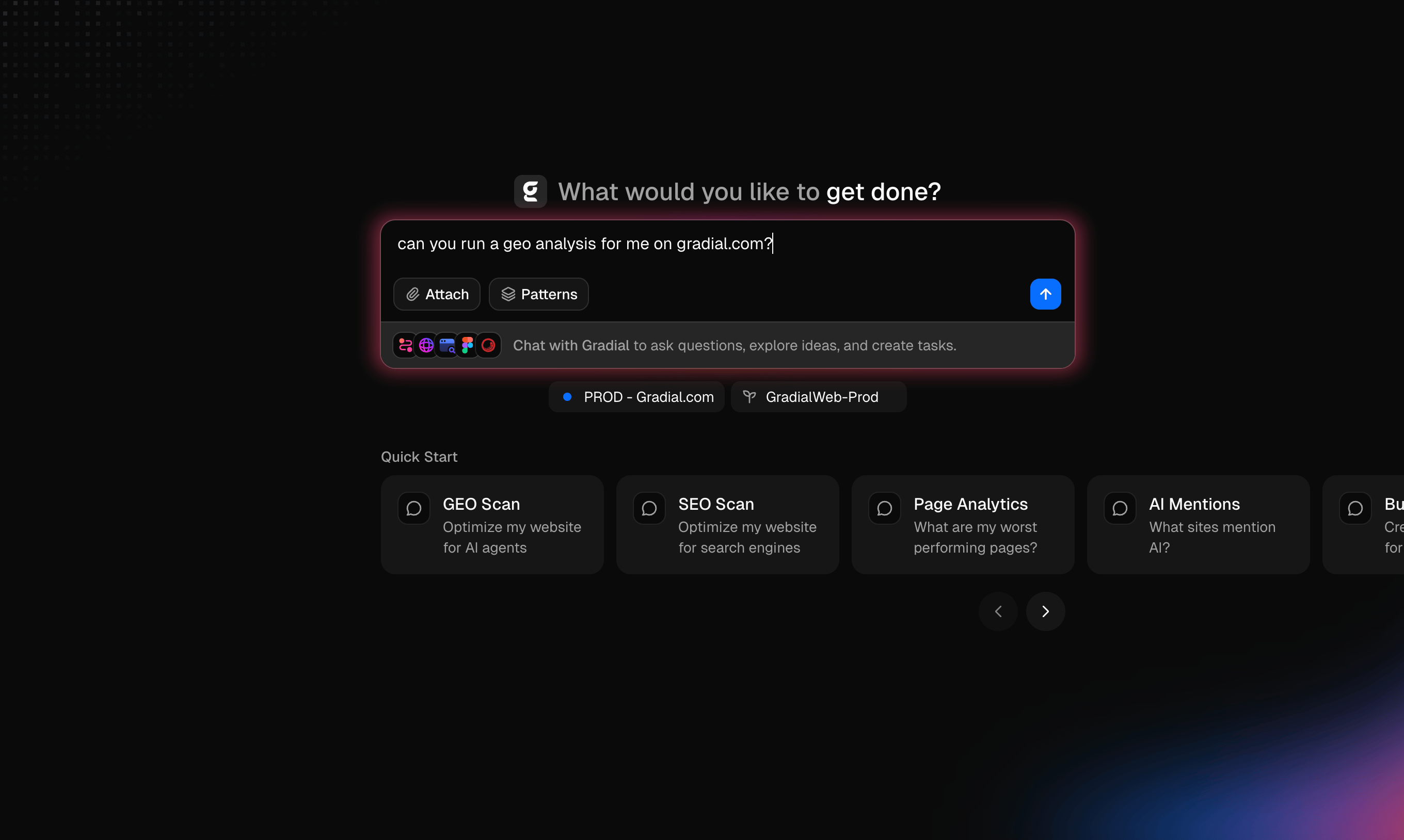

Gradial just launched a GEO Agent. Here's how it compares to visibility tools like Profound, and why we think the category has been missing something important.

If you've been shopping for a GEO or AEO tool lately, you've seen no shortage of impressive platforms.

The category is fast-growing and increasingly crowded. Some of these tools have raised hundreds of millions of dollars, earned "category leader" designations, and built genuinely sharp marketing around the promise of AI search visibility. The data they surface (hundreds of millions of daily prompts tracked across ChatGPT, Gemini, Perplexity, and others) is real, and it's valuable.

We're not here to tell you those tools are bad. They aren't. We're here to ask a question that we think the GEO category has been quietly avoiding: after you get the report, who actually does the work?

That question has gone unanswered since SEO visibility tools took hold in the mid-2000s. Back then, nobody even knew to ask it. We just accepted the insights and were totally okay with being handed more work to do.